One night, a woman is examined in the emergency department complaining of vertigo. Her physician orders a CT scan, and when the tests come back negative, he diagnoses her with a benign inner ear condition and sends her home. He never sees her again.

What he never learns is that her dizziness was far from benign. The next day her face is crooked, her speech is slurred, and she can't move her left arm or leg — the telltale signs of a stroke. She is rushed to another hospital, but because her condition wasn't caught earlier, she suffers long-term disability.

How often do missed diagnoses like this occur at a given hospital? What is "good" performance in stroke diagnosis for dizzy patients? Are certain groups of patients at greater risk of a diagnosis-related harm? What are the opportunities for improvement?

Today, hospitals have little clue. Employees might hear about diagnostic mistakes from morbidity and mortality conferences, malpractice cases or hallway scuttlebutt. However, they don't have valid, routine measures for their diagnostic performance. And they almost never find out if the patient who suffers an adverse outcome after a missed stroke returns to a different hospital or health system, which happens in about one-third of cases.

In 2015, the National Academy of Medicine (NAM) raised alarms about diagnostic errors in a landmark report, Improving Diagnosis in Health Care, estimating that most of us will experience at least one diagnostic error in our lifetimes. More recent studies suggest that more than 12 million Americans are misdiagnosed each year, and that more than half a million die or are permanently disabled as a result. The NAM report made a host of recommendations, such as investing in more research, fostering diagnostic teamwork and developing valid and useful measures of diagnostic performance.

It's that last issue — measurement — that's the biggest immediate barrier to widespread improvement. If we have sound, publicly reported measures of diagnostic accuracy, hospitals will be able to track their performance and compare it against their peers. Knowing that their reputations and reimbursements are tied to getting diagnoses right, they will have incentives to dig into their data and figure out ways to improve. If you had chest pain, would you feel safe going to a hospital that misses more heart attacks than average? Probably not. Until hospitals know that patients and payers have such data, we will struggle to move the needle, because there is no needle to move.

New Grant Aims to Speed Diagnostic Measure Development

But we're working on it. Recently, the Gordon and Betty Moore Foundation awarded the Armstrong Institute's Center for Diagnostic Excellence a two-year, $2.4-million grant to rapidly accelerate the development of diagnostic performance measures and dashboards. Together with colleagues from Kaiser Permanente, we will create and validate new measures for missed sepsis and missed heart attack — complementing an existing measure for missed stroke. We also will develop and operationalize visual analytics dashboards that allow us to track and assess our performance for these three conditions.

To help spread these measures and dashboards nationwide, the Society to Improve Diagnosis in Medicine (SIDM) will serve as an "honest broker" in the grant, by engaging multiple health systems and hospitals in the effort. SIDM will explore hosting a national data repository to permit benchmarking across institutions. Visual analytics tools will help maximize the ability of these organizations to drill down into the details of their performance by the age, sex, race or specific clinical characteristics of patients.

In addition, the Moore Foundation grant will help us to build a "dashboard measures engine," guiding researchers in the development of new measures for diagnosis-related harm. Right now, developing a measure for just one dangerous disease is extremely labor-intensive, requiring special statistical analyses that can take weeks of back-and-forth with data analysts to make each small change, dragging the process out over months or even years. The engine will speed this process, making it easier for researchers or quality and safety officers to explore massive data sets in real time. It will have taken us several years to create the first three measures — for stroke, sepsis and heart attack. Once we have the engine built, I can easily envision a dozen or more new measures for dangerous acute conditions such as pulmonary embolus, aortic dissection and meningitis coming out in a matter of months.

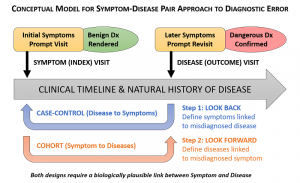

This upcoming work will build on a new method for identifying diagnosis-related harms that we have published recently. Called SPADE — Symptom-Disease Pair Analysis of Diagnostic Error — this method mines databases with hundreds of thousands of patient visits to identify cases in which a patient's diagnosis changes from benign to dangerous over a relatively short period of time. For instance, SPADE can identify the frequency with which patients come to a clinic or ED with a fever, are told that it's a viral infection, but are later hospitalized with bacterial sepsis.

As we explain in a December 2017 article in BMJ Quality & Safety, the method is best suited for identifying acute and sub-acute conditions that are missed, and it provides the most accurate results when we are mining patient data across multiple care settings and health systems. Statewide health information exchanges and integrated health systems that track visits to clinics, hospitals and other settings (e.g., Kaiser Permanente) are good examples of such data sources.

Until now, diagnosis-related harms had to be identified through a labor-intensive review of clinical notes in the medical record. That approach is unsustainable for assessing performance at the hospital or health system level, and it is plagued by poor documentation, hindsight bias and inter-rater disagreement. SPADE allows us to track performance in near real time, producing quantitative results from readily available data and statistically valid sampling techniques.
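To make the idea concrete, here is a minimal sketch of the kind of "look-forward" search described above: start from treat-and-release visits that ended in a benign diagnosis, then check whether the same patient was hospitalized with the dangerous disease shortly afterward. This is an illustration only, not the published SPADE implementation. The `Visit` record, the `look_forward` function, the plain-text diagnosis labels, the 30-day window and the sample visits are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Visit:
    patient_id: str
    visit_date: date
    diagnosis: str   # simplified label; real data would use coded diagnoses
    admitted: bool   # True if the visit was a hospitalization


def look_forward(visits, benign_dx, dangerous_dx, window_days=30):
    """Count benign treat-and-release visits and find those followed by a
    hospitalization for the dangerous disease within window_days."""
    by_patient = {}
    for v in visits:
        by_patient.setdefault(v.patient_id, []).append(v)

    index_count = 0
    missed = []  # (index visit, later hospitalization) pairs
    for patient_visits in by_patient.values():
        patient_visits.sort(key=lambda v: v.visit_date)
        for i, index in enumerate(patient_visits):
            if index.admitted or index.diagnosis != benign_dx:
                continue
            index_count += 1
            deadline = index.visit_date + timedelta(days=window_days)
            for later in patient_visits[i + 1:]:
                if later.visit_date > deadline:
                    break
                if later.admitted and later.diagnosis == dangerous_dx:
                    missed.append((index, later))
                    break
    return index_count, missed


# Made-up visits, for illustration only.
visits = [
    Visit("A", date(2018, 1, 1), "benign vertigo", False),
    Visit("A", date(2018, 1, 2), "stroke", True),
    Visit("B", date(2018, 1, 5), "benign vertigo", False),
    Visit("C", date(2018, 1, 7), "benign vertigo", False),
    Visit("C", date(2018, 4, 1), "stroke", True),  # outside the 30-day window
]

n_index, missed = look_forward(visits, "benign vertigo", "stroke")
print(f"{len(missed)} of {n_index} benign visits were followed by a stroke admission")
```

A real analysis would also need a comparison against the baseline rate of strokes expected in such patients, and it only works if the data follow patients across hospitals and health systems.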

Leveraging Diagnosis Data for Action

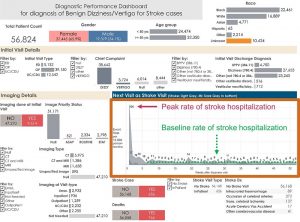

In March we also published a prototypical dashboard — the first of its kind — for stroke misdiagnosis. Richly detailed, it allows users to drill down into the data in search of special patterns or trends, such as examining any differences in outcomes based on race, age or gender. Routine use of such dashboards could be transformational for institutions eager to start solving the diagnostic error problem.

Because adverse events after a misdiagnosis are relatively uncommon, the numbers will be too small to provide feedback to individual clinicians. For instance, only about 1 percent of ED patients with complaints of dizziness suffer a more serious stroke after being told it was just an ear problem. However, these dashboards also collect utilization and process-of-care data that we can mine for insights and use to drive improvement. In the case of strokes, for instance, we might discover that a majority of the missed diagnoses occur when the physician orders a CT scan instead of an MRI. This would make sense, because CTs catch just about 10 percent of strokes in dizzy patients, whereas MRIs catch about 80 percent. Or we might find that missed strokes were much more common in patients who did not get a "tele-dizzy" consult, in which specialists conduct a device-enabled remote bedside exam of minuscule eye movements to catch early strokes in dizzy patients more accurately than MRI.
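The CT and MRI figures above translate into a simple back-of-the-envelope calculation. The sketch below treats "catch" as test sensitivity and uses a hypothetical group of 100 dizzy patients who are in fact having a stroke. Both are assumptions for illustration, and `expected_missed` is a made-up helper name.

```python
def expected_missed(strokes: int, sensitivity_pct: int) -> float:
    """Strokes expected to go undetected, given a test's sensitivity in percent."""
    return strokes * (100 - sensitivity_pct) / 100


# Hypothetical cohort: 100 dizzy patients who are actually having a stroke.
ct_missed = expected_missed(100, 10)   # CT catches about 10 percent
mri_missed = expected_missed(100, 80)  # MRI catches about 80 percent

print(f"Missed with CT: {ct_missed:.0f}, missed with MRI: {mri_missed:.0f}")
```

Under these assumptions, CT would miss about 90 of the 100 strokes and MRI about 20.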

With these data, we could take action. For instance, we could notify physicians who are high utilizers of CT scans in dizzy patients or low utilizers of "tele-dizzy" services, to remind them of best practices. We could also integrate these elements into standard care pathways and order sets. Then, through continued monitoring, we could track changes in CT scan utilization or the missed-stroke frequency.
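As a rough sketch of how that kind of feedback list might be produced, the snippet below computes each clinician's CT ordering rate among dizzy-patient encounters and flags those above a cutoff. The function names, the 50 percent threshold, the minimum-visit rule and the sample encounters are all hypothetical. A real dashboard would set these from its own data and clinical guidance.

```python
from collections import defaultdict


def ct_utilization(encounters):
    """Map clinician -> (CT count, total dizzy-patient encounters).

    encounters: iterable of (clinician_id, imaging), where imaging is
    "CT", "MRI" or None.
    """
    ct = defaultdict(int)
    total = defaultdict(int)
    for clinician, imaging in encounters:
        total[clinician] += 1
        if imaging == "CT":
            ct[clinician] += 1
    return {c: (ct[c], total[c]) for c in total}


def high_utilizers(stats, threshold=0.5, min_visits=3):
    """Clinicians whose CT rate exceeds threshold, skipping tiny samples."""
    return sorted(
        c for c, (n_ct, n) in stats.items()
        if n >= min_visits and n_ct / n > threshold
    )


# Made-up encounters, for illustration only.
encounters = [
    ("dr_a", "CT"), ("dr_a", "CT"), ("dr_a", "MRI"),
    ("dr_b", "MRI"), ("dr_b", None), ("dr_b", "CT"),
    ("dr_c", "CT"),  # too few visits to judge
]

stats = ct_utilization(encounters)
flagged = high_utilizers(stats)
print(flagged)
```

The minimum-visit rule is there so that a clinician with only one or two encounters is not flagged on the basis of too little data.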

Some of my colleagues in diagnostic safety research think that we're still five to 10 years from having publicly reported measures of diagnostic performance. I hope that's not the case. Call me naïve, but I think we could be just one or two years away if the focus and political will are there.

This summer we'll be submitting the first of these measures (rate of excess harms after missed stroke) to the National Quality Forum (NQF) for possible endorsement as early as 2019. The NQF's endorsement is a stamp of approval that often paves the way for the Centers for Medicare & Medicaid Services to adopt a new measure.

I hope that in two years' time, with the benefit of our work under the Moore Foundation grant, there will be a national movement to actively incorporate diagnostic performance metrics into routine safety monitoring by hospitals, at least as part of clinical demonstration projects targeting improved diagnosis.

Awareness about diagnostic errors is greater than ever. There's uniform agreement in the industry about the need for more research and investment. But until diagnostic performance measures become more widespread, we will have difficulty getting more hospitals, policymakers and others to invest in fixing the problem.

Well, finally! Someone is trying to mitigate all the medical "whoops." You are right that until medical feet are held to the fire, nothing will change. EDs live by the "treat 'em and street 'em" mantra, and most are farmed out to medical staffing companies with no real stake in the people or communities they serve. Missed diagnosis is the driving cause of medical malpractice, but in diving deeper into the misses, we observe gross errors recorded in medical records that lead to the wrong protocol and plan of care. These errors compound with every follow-up visit, as most medical records contain 80% error. No, the patient cannot get these errors resolved, as that would require an admission of error and the willingness to correct it on the part of the provider. HIPAA states, "IF a provider DECIDES to correct a medical record..." blah, blah, blah. That law needs to change! Even when the medical consumer has proof documented in writing or recorded, they rarely get errors deleted or corrected. And no, the law then states that if all requests fail, the patients can REQUEST that their written correction be included in their file, which may appear as an icon attachment (who is going to click on that, even if it gets noticed?), or the full DISAGREEMENT by the patient may appear, marked as patient DISAGREEMENT. How do you think that gets viewed? What weight does it carry? Not much. It becomes "subjective" (read: ignored). The problem with EHRs is that all these errors are then in an HIE, affecting life-and-death matters or the ability to get a properly rated insurance policy. I could cite nightmare examples from my files, but as long as medicine remains a protected class of citizens/business, I don't hold out much hope for what you are trying to do. Docs, protect yourselves and your licenses. Print your patient's entire medical record, ask them to read and correct it, then take your histories accordingly. Plan care based on facts, because someone else's screw-up sure is not going to be mine.
